For allocators navigating AI adoption in diligence workflows

Picture this: a diligence analyst uploads a manager’s DDQ to an AI tool and asks it to summarize the document. Twenty minutes later, a clean, well-structured memo lands in their inbox. The IC loves it. The process feels transformed.

Two weeks later, during a deeper review, someone notices that the manager’s ADV contained a material disclosure that directly contradicted a key claim in the DDQ. The AI never flagged it. Nobody asked it to compare the two documents. The memo went to the IC without that context.

This is not a story about AI failing. It is a story about how AI performs exactly as instructed and how the most consequential mistakes in AI-assisted diligence are almost never about the technology. They are about the process, or the absence of one.

Why This Moment Matters

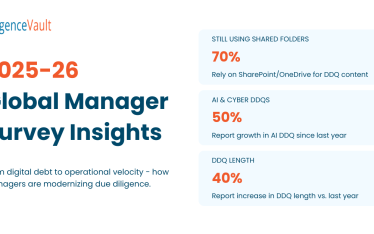

AI adoption among allocators is accelerating across endowments, pension funds, family offices, and institutional platforms. The tools are genuinely powerful. But most teams are adopting AI tactically: one analyst at a time, task by task, without a governing framework. The result is uneven quality, inconsistent outputs, and, in some cases, real risk, and it almost always comes down to the same set of avoidable mistakes.

The 11 Mistakes

1. Treating AI Like a Search Engine and Prompting Like It

Vague inputs produce vague outputs. Asking AI to ‘analyze this DDQ’ is like handing a newly hired analyst a document and walking away. AI performs best when the task, format, scope, and constraints are clearly defined. Most early frustration with AI tools traces back to this single gap.

Aha moment: We have been using a tool capable of structured expert-level analysis the same way we Google something.

Best practice: Build a prompt library. Standardize prompts for common diligence tasks, summarizing risk factors, extracting fee terms, flagging conflicts of interest, and make them available to the whole team. Consistency starts with the prompt, not the output.

2. Not Requiring Citations

If you do not ask for citations, the model will not provide them. It will produce fluent, confident-sounding conclusions without evidence, and those conclusions can be wrong. In a diligence context, where every finding may eventually be scrutinized by an LP, a consultant, or a regulator, untraceable conclusions are a liability.

Aha moment: I have been circulating memos with conclusions I cannot actually trace back to a source.

Best practice: Make citations non-negotiable in every prompt. Template language: ‘For every conclusion you draw, cite the document name, section, and page number.’ If the AI cannot cite it, treat the output as unverified.

3. Providing No Context About Your Mandate

AI has no idea who you are, what you care about, or what risks keep you up at night, unless you tell it. A family office with a concentrated equity book and an endowment with a 20-year horizon should be asking very different questions of the same manager. Without context, the AI gives everyone the same analysis.

Aha moment: The AI does not know we are a liability-driven pension with zero tolerance for duration concentration. Of course, the output felt generic.

Best practice: Build a standing context block, a short paragraph describing your organization, investment mandate, risk priorities, and diligence framework, and prepend it to every substantive prompt. This single habit dramatically improves output relevance.

4. Analyzing One Document in Isolation

Real diligence is comparative. A single document, analyzed in isolation, will always look more coherent than the full picture. Cross-document inconsistencies, between what a manager says in their DDQ versus their ADV, or between their PPM and their most recent investor letter, are among the most valuable signals in manager due diligence. Single-document analysis structurally misses them.

Aha moment: The DDQ said one thing. The ADV said something different. I never asked the AI to compare them.

Best practice: Establish a minimum document set before AI analysis begins: DDQ, ADV, PPM, most recent audited financials, and any ESG disclosures. Run cross-document comparison as a standard step, not an afterthought. The inconsistencies are often where the real diligence lives.

5. Over-Trusting the Output

AI can make reasoning mistakes. It can miss nuance. It can draw plausible-sounding conclusions from incomplete information. These are not rare edge cases; they are predictable features of how large language models work. AI could produce the first-pass analysis. Human judgment should produce the final one.

Aha moment: I sent that memo to the IC, and I could not defend two of the conclusions when they pushed back.

Best practice: Treat AI output as a first draft, not a deliverable. Institute a formal challenge step, a human review specifically looking for reasoning gaps, unsupported conclusions, and missing context, before anything goes upstream. The analyst still owns accountability.

6. No Audit Trail

Allocators operating under regulatory oversight or fiduciary governance need to document how conclusions are reached. If AI played a role in a diligence process, there should be a record of what was asked, what was returned, and what was relied upon. Right now, most teams have no such record.

Aha moment: If asked how we reached that conclusion, we could not show our work. The AI conversation is gone.

Best practice: Log every material AI interaction: the prompt used, the document set, the model, the date, and the analyst. Treat this as part of the diligence file. As regulatory scrutiny of AI-assisted processes increases, and it will, the ability to show your work will matter.

7. Using Unapproved Tools for Confidential Documents

This is the ‘shadow AI’ problem. Analysts are not careless; they are under time pressure and reaching for the fastest tool available. Uploading confidential diligence materials to unapproved platforms can violate data policies, LP confidentiality agreements, and, in some jurisdictions, regulatory requirements. The solution is governance, not prohibition.

Aha moment: I uploaded a confidential DDQ to a free LLM tool without reviewing the data use and security setup. That data may have been used for model training.

Best practice: Publish an acceptable use policy with approved tools clearly listed. Distinguish clearly between tools appropriate for internal, non-sensitive tasks and tools approved for confidential counterparty materials. Review it annually. If you do not give people an approved alternative that actually works, they will use whatever is fastest.

8. Assuming the Document Is Accurate

AI analyzes what is in a document. It has no way of knowing whether the document contains errors, reflects outdated information, or omits material disclosures. A flawless analysis of the wrong document is still the wrong analysis.

Aha moment: The AI analyzed the document perfectly. But the document itself was outdated. That is still my problem.

Best practice: Treat document validation and AI analysis as separate, sequential steps. Before running analysis, verify that documents are current, complete, and sourced directly from the manager or a verified repository. AI cannot tell you that a document is two years old and that there is a new version available.

9. Not Asking What Is Missing

AI can analyze what is present. It cannot independently flag what should have been disclosed but was not. This is a critical gap in manager diligence, where selective disclosure is a known risk. Analysts must still ask probing questions, but a well-designed prompt can help identify where those questions should be directed.

Aha moment: The AI told me everything that was in the document. Nobody told me what was not in it, and that is where the risk was.

Best practice: Run a disclosure completeness prompt as a standard step. Ask the AI: ‘For a document of this type, what topics and disclosures would you typically expect to see? What appears to be absent from this document?’ This surfaces omissions that a summary-focused prompt will never catch.

10. Not Iterating

High-performing AI users iterate. The best results almost always come after two or three prompt refinements, adding context, tightening the task, or requesting a different format. When teams do not standardize this habit, AI quality becomes a function of individual skill rather than team process.

Aha moment: My first prompt gave me a mediocre answer, and I accepted it. The analyst next to me ran three iterations and got something IC-ready.

Best practice:Build iteration into the workflow, not the individual. Define minimum iteration standards for different task types. A DDQ summary might require two passes; a red flag analysis might require three. Do not let output quality be a function of who happens to be more persistent on a given day.

11. No Systematic Workflow

Most teams are using AI ad hoc: one task here, one document there, with no consistent framework. The result is that AI quality becomes entirely dependent on individual skill. The teams creating durable competitive advantage are those who have asked a harder question: not ‘how do we use AI on this task?’ but ‘where does AI belong in our diligence process, and what does good look like at each stage?’

Aha moment: We are using AI everywhere and nowhere. Every analyst does something different, and we cannot quality-control any of it.

Best practice: Map your diligence process end-to-end. Assign AI a defined role at each stage. Treat it like any other tool in your technology stack: governed, documented, and trained on. The goal is not to constrain how people use AI; it is to ensure the floor of quality is high regardless of who is running the process.

How to Build a Culture Where AI Is Used Well

Knowing the mistakes is the first step. The second is building an environment where those mistakes become hard to make.

Early wins matter more than big wins. Pick one narrow, high-friction task such as DDQ summarization, fee term extraction, or red flag identification, and make AI dramatically better at it. One analyst saving two hours on a routine task becomes the internal case study that changes behavior more effectively than any mandate.

Peer demonstration beats training. Seeing a colleague produce an IC-ready first draft in twenty minutes is more persuasive than any lunch-and-learn. Create visible moments where AI-assisted work speaks for itself.

Remove friction from starting. If the prompt library is a shared document one click away, people use it. If they have to figure out prompting from scratch every time, many will not bother.

Normalize iteration publicly. If senior team members are visibly using and refining AI tools, it signals that imperfect first outputs are expected and that iteration is the process, not a sign of failure.

DIY Tools vs. Purpose-Built Platforms: A Decision Framework

Not all AI tools are appropriate for all tasks. Getting this wrong is one of the most common and consequential mistakes allocator teams make.

Use DIY tools (CoPilot, Claude, ChatGPT, Gemini) when: the task is internal and non-sensitive; you need open-ended reasoning or creative drafting; the document set is small enough to paste directly; speed matters more than auditability; or the task is genuinely one-off and not part of a repeatable workflow.

Use purpose-built platforms when: you are working with confidential documents (DDQs, ADVs, PPMs, audits); you need cross-document analysis at scale; auditability is required; the task is repeatable and needs consistent quality across analysts; you need structured data outputs rather than prose summaries; or workflow integration matters

Think of it this way: DIY AI is a power tool. Purpose-built AI is a production line. A power tool is flexible and fast, great in skilled hands for the right job. A production line is consistent, governed, and auditable; it produces the same quality regardless of who is running it.

Most allocator teams need both. The firms that use them well are the ones who are deliberate about which belongs where and who have built governance around that decision rather than leaving it to chance

The Throughline

Every mistake on this list shares a root cause: teams adopting AI tactically without thinking about it as a governed process. The prompting is inconsistent. The audit trail is absent. The workflow is undefined. The tool choice is unmanaged.

The firms that will build a durable competitive advantage through AI are not necessarily the ones with access to better models. They are the ones who have asked the harder organizational question: how do we use AI in a way that is consistent, auditable, and genuinely embedded in how we work, not just in how individual analysts work on their best days?

AI will dramatically accelerate diligence workflows. But it works best when paired with disciplined human oversight, a clear process, and the institutional maturity to know the difference between a powerful first draft and a finished conclusion.

The technology is ready. The question is whether the process is.

DiligenceVault

Fund network and purpose-built AI for investment diligence.