The broad promise of AI is that it will allow humans to make decisions exponentially faster and apply deeper analytics. Let’s explore what the future looks like once the promise of AI for digitization is delivered. Consider the following questions, which users in the DiligenceVault community are looking to answer:

- What is the source of the real estate manager’s outperformance: market cycle shifts, cap rate compression or manager skill?

- Is our emerging manager program delivering the expected returns?

- How quickly can we assess ESG characteristics of our external mandates?

- How can we readily identify early warnings or blind spots around significant changes in the profile of a complex asset manager?

- What factors are driving the returns in our private markets portfolio: public market multiples vs. leverage vs. operational efficiency?

In theory, the above questions seem simple enough to answer, since all this information is available to investors researching their investment opportunities. In practice, however, answering them is nearly impossible. Valuable insights depend on high-quality data, but much of that data comes from sources external to the investment office and arrives through fragmented channels, which limits investors' ability to control it. Today, most firms spend more time collecting and preparing high-quality data for analytics than they do actually analyzing it.

Moreover, as the volume, variety, and speed of such data increase, so does the potential of data applications to drive superior investment decisions. However, this potential is counterbalanced by significant challenges in classifying, extracting and analyzing these data sets. What is required is a digitization solution that harnesses this information systematically.

Two Shades of Digitization

There are two methods: (a) digitization at the source, which delivers precision, and (b) natural language processing (NLP), a segment of AI that understands the intent of human language as it is written or spoken, used to classify, extract and analyze text information. With NLP, delivering accuracy is paramount.

Digitization at Source: Digitization at source requires behavioral change more than anything. We have implemented this functionality at scale for our users, who are converting complex diligence and data requests across investments, tax, compliance, risk and operational areas from Excel and Word into structured data sets and time series. Once implemented, it delivers a high degree of precision at a much lower cost, because the creators and the consumers of the information share that cost. Rather than training algorithms to replace repetitive human behavior, we focus on data flows that challenge and disintermediate historical behavior. One of the key views in Oliver Wyman's 2019 investment industry overview resonates strongly with us. The report contends that:

Instead of pushing the boundaries of analytical sophistication, asset management firms will take more of a “back to basics” approach, re-focusing their efforts on detailing clear uses, getting better business engagement, updating core workflows and data models, and designing solutions for front line users, not for data engineers.

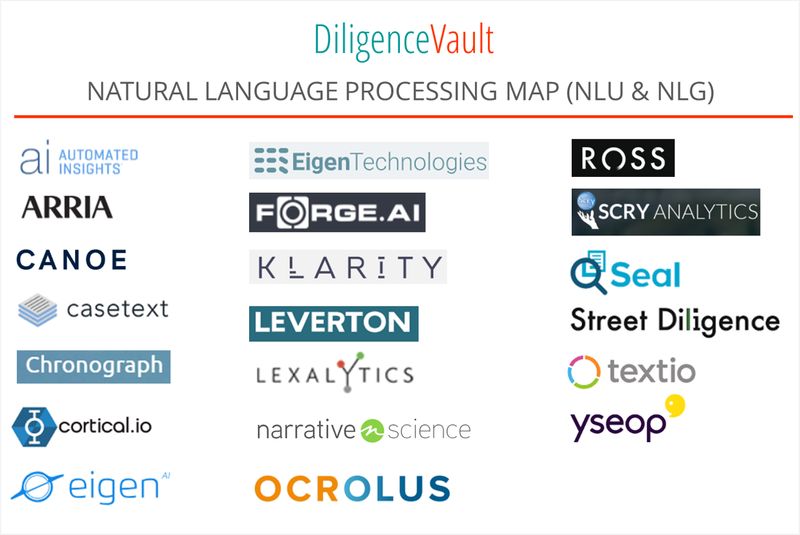
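To make digitization at source more concrete, here is a minimal sketch, assuming each diligence answer is captured as a typed record rather than a cell in a spreadsheet. The `Response` schema, the question identifiers (`AUM_USD_M`, `HEADCOUNT`), the sample values and the `flag_changes` threshold are all hypothetical illustrations, not DiligenceVault's actual data model. It shows how structured answers roll up into per-question time series, and how those series can then surface the kind of early-warning signal on a manager's profile raised in the questions above.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Response:
    """One answer to one structured diligence question (hypothetical schema)."""
    manager: str
    question_id: str  # hypothetical stable identifier, e.g. "AUM_USD_M"
    as_of: date
    value: float


def to_time_series(responses):
    """Group answers into {(manager, question_id): [(as_of, value), ...]}, sorted by date."""
    series = defaultdict(list)
    for r in responses:
        series[(r.manager, r.question_id)].append((r.as_of, r.value))
    return {key: sorted(points) for key, points in series.items()}


def flag_changes(series, threshold=0.10):
    """Return (key, as_of, pct_change) wherever a value moved more than `threshold`
    relative to the previous reported point. The 10% default is an arbitrary example."""
    flags = []
    for key, points in series.items():
        for (_, prev), (as_of, curr) in zip(points, points[1:]):
            if prev != 0 and abs(curr - prev) / abs(prev) > threshold:
                flags.append((key, as_of, (curr - prev) / prev))
    return flags


# Illustrative, made-up answers submitted directly by a manager in structured form.
responses = [
    Response("Manager A", "AUM_USD_M", date(2019, 6, 30), 1250.0),
    Response("Manager A", "AUM_USD_M", date(2018, 12, 31), 1100.0),
    Response("Manager A", "HEADCOUNT", date(2019, 6, 30), 42.0),
]
ts = to_time_series(responses)
alerts = flag_changes(ts)
```

Because the answers arrive already structured, no extraction step is needed: the time series and the change alerts fall out of simple grouping, which is where the precision and cost advantage of digitizing at the source comes from.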

Destination Add-On: While digitization at source is the most under-utilized of the transformative, high-impact methodologies, not all information can easily be digitized at source in the immediate future. This is where AI must deliver on its promise via NLP and machine learning algorithms. Implementation will succeed first with digitization, next with determining relevance, and finally with repackaging the relevant information. In the map below we have identified innovation partners who help deliver on the promise of NLP, in both natural language understanding (NLU) and natural language generation (NLG).
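The three implementation steps described for the destination add-on (digitize, determine relevance, repackage) can be sketched as a small pipeline. This is a deliberately simplified illustration under stated assumptions: stage 1 assumes the document text has already been extracted (for example by OCR), the sentence splitter is naive, and a keyword matcher over a hypothetical `TOPIC_KEYWORDS` vocabulary stands in for the trained NLP and machine learning models the text refers to. None of these names come from a specific vendor or library.

```python
import re
from typing import Dict, List

# Hypothetical topic vocabulary. A keyword lookup is only a stand-in here;
# a production system would use a trained NLU classifier instead.
TOPIC_KEYWORDS: Dict[str, List[str]] = {
    "esg": ["esg", "carbon", "emissions", "diversity"],
    "personnel": ["departure", "hired", "resigned", "portfolio manager"],
    "leverage": ["leverage", "debt", "credit facility"],
}


def digitize(raw_text: str) -> List[str]:
    """Stage 1: split already-extracted document text into sentences (naive splitter)."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", raw_text) if s.strip()]


def relevant_topics(sentence: str) -> List[str]:
    """Stage 2: tag a sentence with every topic whose keywords it mentions."""
    lowered = sentence.lower()
    return [topic for topic, words in TOPIC_KEYWORDS.items()
            if any(w in lowered for w in words)]


def repackage(sentences: List[str]) -> Dict[str, List[str]]:
    """Stage 3: group relevant sentences by topic; sentences with no topic are dropped."""
    packaged: Dict[str, List[str]] = {}
    for s in sentences:
        for topic in relevant_topics(s):
            packaged.setdefault(topic, []).append(s)
    return packaged


# Made-up excerpt from a manager letter, for illustration only.
letter = ("The fund added a new credit facility in Q2. Our CIO resigned in May. "
          "The weather was mild. We began reporting carbon emissions for all holdings.")
summary = repackage(digitize(letter))
```

Keeping the three stages as separate functions mirrors the order in which the text says implementation succeeds, and lets any one stage (most likely the relevance step) be swapped for a partner's model without touching the others.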

Building the Partnership

How do you decide whom to partner with? We return to six fundamental questions that help us make this decision:

1. What type of AI is right for your use case – general intelligence or domain-specific?

2. What types of information sources are being digitized: structured, semi-structured or unstructured? Are these corporate and legal documents, bank statements, tax documents, or industry-specific reports?

3. How stable is the information – are the sources of information expected to be static, or would the styles, format, and content evolve over time?

4. What training data sets do you have available?

5. What should the operational flow be: a cloud API or on-prem hosting?

6. What is the key bottleneck in your current process where AI can have the biggest impact: information classification, information extraction, information validation and normalization, or information analysis?

Keeping Ahead of the Promise of AI

As digitally advanced users execute their strategies, it is important to take a step back and define an execution plan that covers both source and destination digitization, in order to realize the full scale and promise of usable data in investment management.